Anthropic recently revealed what appears to be a large-scale, state-sponsored cyber-espionage campaign built on agentic AI. A hacking group used Claude Code, Anthropic’s AI models, and a series of Model Context Protocol (MCP) servers to automate an attack that included:

- Network mapping: Rapidly scanned target environments to locate high-value databases, credential stores, and privileged systems.

- Credential hunting: Researched vulnerabilities on the fly, wrote and executed custom exploit code to harvest usernames, passwords, session tokens, and API keys, and created persistent backdoors.

- Data exfiltration: Identified, extracted, and categorized sensitive private data by intelligence value, with a focus on high-privilege accounts and crown-jewel assets.

- Post-exploitation documentation: Autonomously generated detailed reports of stolen credentials, system reconnaissance, successful techniques, and recommendations for future operations.

Even more alarming, all of this ran in autonomous loops that achieved 80–90% automation, executing multiple tool calls per second across a series of MCP servers with almost no human intervention after initial targeting. This was made possible by a jailbroken framework that consistently bypassed Claude’s safety guardrails.

Questions Every Enterprise Needs to Ask

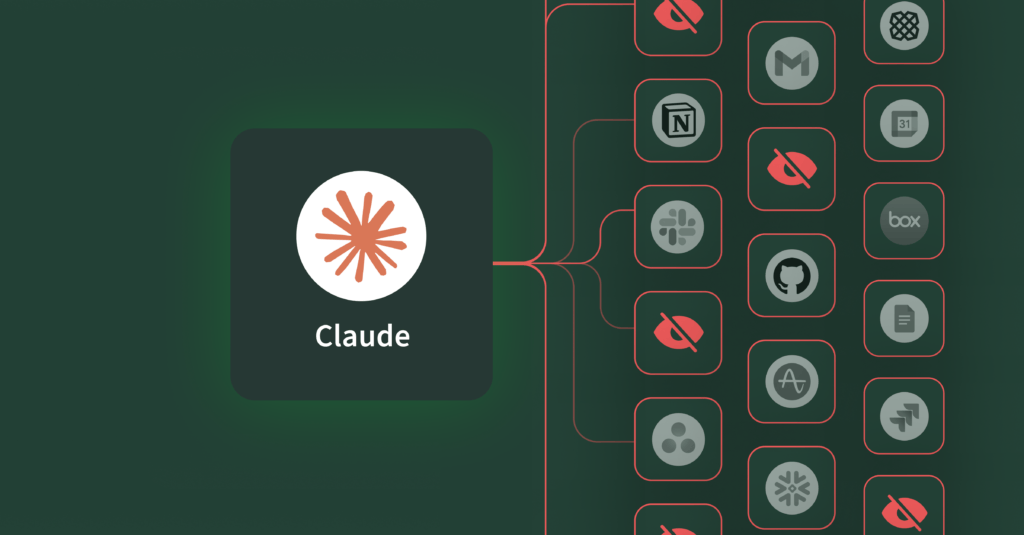

What makes this Anthropic espionage incident stand out is how seamlessly the AI handled the entire operation autonomously, across multiple steps, with speed and precision, bypassing traditional security systems. And inside the enterprise, the same risk pattern already exists. While many employees connect AI agents to official, vendor-supported MCP servers from Salesforce, Notion, Asana, GitHub, or Box, they also frequently tap into third-party servers spun up by individuals or research projects that IT and security teams have not vetted. A single connection to an untrusted MCP server can cascade into unauthorized actions, data exfiltration, or compliance violations, especially when AI agents orchestrate actions across multiple business systems simultaneously.
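One simple first line of defense against unvetted servers is an allowlist checked before an agent is allowed to connect. The sketch below is illustrative only: the hostnames, `APPROVED_MCP_HOSTS`, and both function names are hypothetical placeholders, not part of MCP or any vendor's product.

```python
from urllib.parse import urlparse

# Hypothetical allowlist of MCP server hosts vetted by IT and security.
# These hostnames are placeholders, not real vendor endpoints.
APPROVED_MCP_HOSTS = {
    "mcp.vendor-a.example",
    "mcp.vendor-b.example",
}


def is_approved_mcp_server(url: str) -> bool:
    """Return True only for HTTPS URLs whose exact host is on the allowlist."""
    parsed = urlparse(url)
    host = (parsed.hostname or "").lower()
    return parsed.scheme == "https" and host in APPROVED_MCP_HOSTS


def filter_connections(requested_urls):
    """Split requested MCP server URLs into (approved, blocked) lists."""
    approved, blocked = [], []
    for url in requested_urls:
        (approved if is_approved_mcp_server(url) else blocked).append(url)
    return approved, blocked
```

Matching on the exact hostname rather than a substring means a lookalike such as `mcp.vendor-a.example.evil.com` is blocked. A real deployment would likely also verify server identity and review the tools each server exposes.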

This raises a critical question for every enterprise: Are we ready for AI agents operating without strong security controls and executing tool calls across multiple MCP servers faster than we can monitor them?

If not, we need to understand how we can detect these AI attacks much earlier and limit the blast radius.
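Because the attack described above ran at multiple tool calls per second, call rate is one signal that could help surface runaway or hijacked agents early. Here is a minimal sliding-window sketch; the class name, thresholds, and agent IDs are assumptions for illustration, and a production detector would combine rate with other signals.

```python
from collections import defaultdict, deque


class ToolCallRateMonitor:
    """Flags agents whose tool-call rate exceeds a limit within a time window."""

    def __init__(self, max_calls: int, window_seconds: float):
        self.max_calls = max_calls
        self.window_seconds = window_seconds
        # One queue of recent call timestamps per agent.
        self._calls = defaultdict(deque)

    def record(self, agent_id: str, timestamp: float) -> bool:
        """Record one tool call; return True if the agent is over the limit."""
        window = self._calls[agent_id]
        window.append(timestamp)
        # Discard calls that have aged out of the window.
        while window and timestamp - window[0] > self.window_seconds:
            window.popleft()
        return len(window) > self.max_calls


# Usage: allow at most 3 tool calls per second per agent (illustrative values).
monitor = ToolCallRateMonitor(max_calls=3, window_seconds=1.0)
flags = [monitor.record("agent-1", t) for t in (0.0, 0.1, 0.2, 0.3)]
```

In this usage, the fourth call within the same second is the one that trips the limit. What happens next (pausing the agent, alerting, requiring human approval) is a policy decision the sketch leaves open.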

The Real Problem: Autonomous AI Without the Right Security

This rapid adoption of AI agents and MCP is exposing a new set of security challenges that most enterprises aren’t prepared for:

- Visibility is practically non-existent: Most organizations have no idea how many AI applications are running in their environment right now. Which MCP servers are they accessing? What tools do they have available, and what data are they touching? Who’s responsible when something goes wrong? It’s a complete blind spot.

- The attack surface just exploded: With MCP becoming the de facto standard for connecting AI agents to business systems, we are essentially creating thousands of new pathways into our most critical data. Each MCP server is another potential entry point for an attack. Each tool call is another opportunity for exploitation and exfiltration.

- Shadow AI is the new Shadow IT, but worse: Remember when employees were spinning up their own cloud services without IT approval? Now they are deploying their own MCP servers and AI agents that can autonomously access, modify, share, and delete data across multiple business systems. The difference? These agents act on their own, and loosely managed MCP servers and agents can be more susceptible to attack.

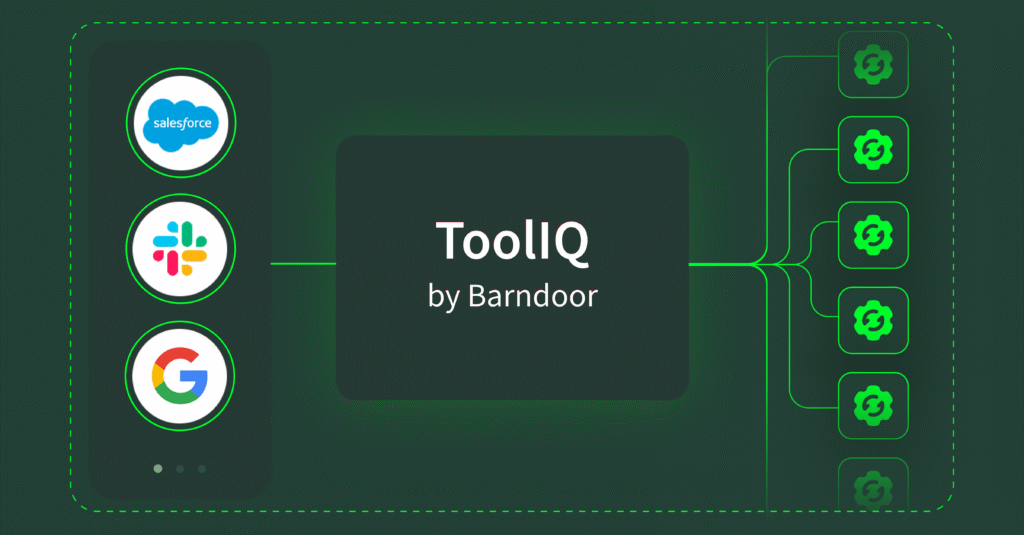

- Legacy security tools are outmatched: Traditional IAM solutions and API gateways were designed for human and API interactions, not for AI-to-data interactions. Your API gateway treats AI-to-data interactions as ordinary API calls. Your IAM system extends user-level permissions to AI agents, granting them more access and autonomy than is appropriate for AI.

- Access control is fundamentally broken for AI: Without fine-grained, AI-specific access controls—down to the user, role, tool call, and business action—agents can read, modify, and delete sensitive data across systems with no centralized oversight.
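To make the idea of fine-grained, AI-specific access control concrete, here is a minimal default-deny sketch that evaluates each tool call by role, tool, and action, and records the user for oversight. The policy table, role names, and tool names are hypothetical; real controls would typically live in a central policy service rather than in application code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolCallRequest:
    user: str    # human on whose behalf the agent is acting
    role: str    # that user's role
    tool: str    # MCP tool the agent wants to call
    action: str  # business action, e.g. "read", "update", "delete"


# Hypothetical policy: (role, tool) -> actions an agent may perform.
POLICY = {
    ("support_agent", "crm.records"): {"read"},
    ("sales_ops", "crm.records"): {"read", "update"},
}


def authorize(request: ToolCallRequest, policy=POLICY) -> bool:
    """Default deny: allow only actions explicitly listed for this role and tool."""
    allowed_actions = policy.get((request.role, request.tool), set())
    return request.action in allowed_actions


def audit_entry(request: ToolCallRequest) -> dict:
    """Build a log record of the decision for centralized oversight."""
    return {
        "user": request.user,
        "role": request.role,
        "tool": request.tool,
        "action": request.action,
        "decision": "allow" if authorize(request) else "deny",
    }
```

The key design choice is that the agent does not simply inherit everything its user can do: anything not explicitly permitted for a role and tool is denied, and every decision leaves a record.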

The uncomfortable reality is that every day you deploy AI agents or use MCP servers without proper governance, you are essentially installing backdoors into your own enterprise.

Introducing the Strategic Guide for AI & MCP Security

Our new Strategic Guide for AI & MCP Security breaks down exactly how AI agents fundamentally change your enterprise risk profile and provides actionable recommendations for mitigating those risks before they become breaches.

Inside, you’ll find:

- A clear breakdown of how MCP is transforming enterprise AI operations—and why that transformation creates new security vulnerabilities

- The specific risks that emerge when AI agents operate across multiple business systems simultaneously

- Practical recommendations for implementing AI-native security controls that actually work

Final Thought

As AI becomes increasingly capable, autonomous, and integrated into core business systems, it’s also becoming increasingly attractive to threat actors. Even without malicious intent, the risks are real: employees inadvertently exfiltrating sensitive data, AI agents deleting critical records, or automated workflows triggering unintended cascading changes across systems. Add bad actors into the equation—who see AI agents and MCP as new attack vectors for exploitation—and the stakes become even higher.

The question is no longer whether enterprises will adopt AI agents and MCP servers—it’s whether they’ll deploy them with proper governance before something goes wrong. Most organizations are still experimenting with prompts and tool connections, but real value comes when AI can take safe, contextual action. Building AI-native controls today ensures you can detect issues faster, contain threats sooner, and keep agents operating within safe, predictable boundaries as both adoption and adversarial tactics evolve.