Description

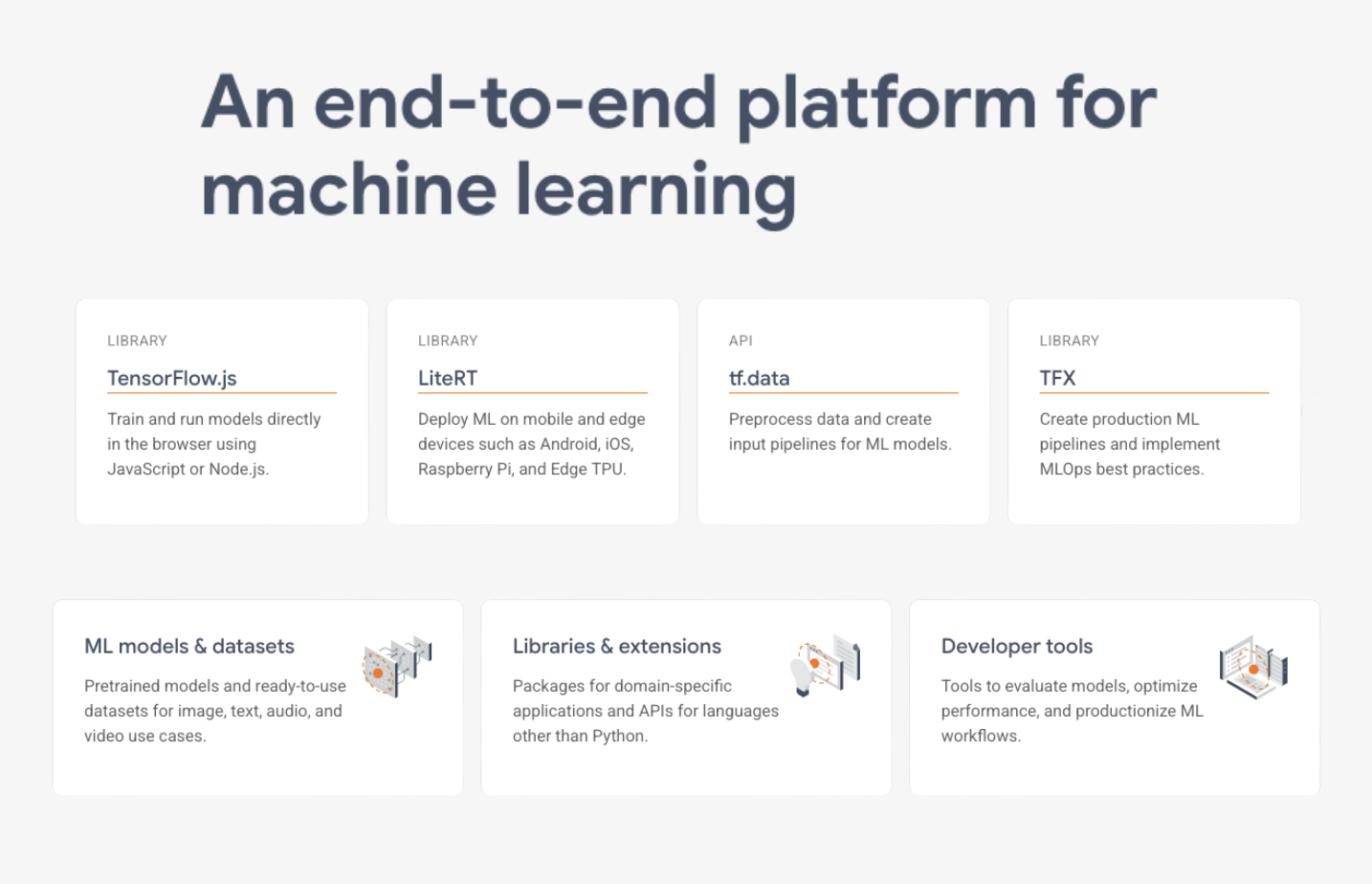

TensorFlow helps engineers and researchers build and run machine learning models at scale. Its graph-based execution model compiles mathematical operations into optimized dataflow graphs that run on CPUs, GPUs, or Google’s TPUs, using the built-in XLA compiler and a distributed runtime. Developers can build models with the high-level tf.keras APIs or drop down to lower-level controls. The framework includes automatic differentiation for computing gradients and the tf.data API for building pipelines that load and batch training data. Models can be deployed with TensorFlow Serving for production servers, TensorFlow Lite for mobile and embedded devices, or TensorFlow.js for the browser. TensorBoard visualizes training progress, model structure, and performance, including across distributed systems.
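As a rough illustration of how these pieces fit together, the minimal sketch below combines a tf.data input pipeline, a one-layer tf.keras model, and a lower-level training step that uses tf.function and tf.GradientTape. The synthetic data, the learning rate, and the names `train_step` and `final_loss` are assumptions made for this example; it is not an official recipe.

```python
import numpy as np
import tensorflow as tf

# Synthetic regression data (hypothetical): y = 3x + 2 plus a little noise.
rng = np.random.default_rng(0)
x = rng.uniform(-1.0, 1.0, size=(256, 1)).astype("float32")
y = (3.0 * x + 2.0 + rng.normal(0.0, 0.05, size=(256, 1))).astype("float32")

# tf.data pipeline: shuffle, batch, and prefetch the training examples.
dataset = (
    tf.data.Dataset.from_tensor_slices((x, y))
    .shuffle(256, seed=0)
    .batch(32)
    .prefetch(tf.data.AUTOTUNE)
)

# High-level path: a one-layer tf.keras model.
model = tf.keras.Sequential([tf.keras.Input(shape=(1,)), tf.keras.layers.Dense(1)])
optimizer = tf.keras.optimizers.SGD(learning_rate=0.1)


# Lower-level path: tf.function traces this Python function into a graph,
# and tf.GradientTape records operations for automatic differentiation.
@tf.function
def train_step(batch_x, batch_y):
    with tf.GradientTape() as tape:
        predictions = model(batch_x, training=True)
        loss = tf.reduce_mean(tf.square(predictions - batch_y))
    grads = tape.gradient(loss, model.trainable_variables)
    optimizer.apply_gradients(zip(grads, model.trainable_variables))
    return loss


# Plain Python loop over epochs and batches; each step runs the traced graph.
for epoch in range(20):
    for batch_x, batch_y in dataset:
        loss = train_step(batch_x, batch_y)

final_loss = float(loss)  # mean squared error on the last batch
```

For most projects, `model.compile(...)` followed by `model.fit(dataset)` would replace the hand-written loop; the explicit step is shown only to make the gradient calculation visible.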

What Problem Does TensorFlow Solve?

Companies face significant challenges building and deploying machine learning models because of fragmented tools, inconsistent environments, and the need for specialized infrastructure and languages. This complexity can delay AI features by months and requires expensive specialized teams. TensorFlow provides a unified platform that covers the entire machine learning workflow, from initial model building to deployment across web, mobile, and cloud systems, using familiar programming languages such as Python and JavaScript.

Pros

- Industry-Standard ML Framework:

TensorFlow offers a comprehensive ecosystem for deep learning, supporting model building, training, deployment, and edge inference.

- Cross-Platform Portability:

Runs on CPUs, GPUs, mobile, and embedded devices with support for TensorFlow Lite and TensorFlow.js for flexible deployment.

- Strong Community and Support:

Maintained by Google and backed by a large open-source community, with extensive documentation, tutorials, and ecosystem tools.

Cons

- Steep Learning Curve:

Mastering TensorFlow’s architecture, graph paradigms, and optimization APIs can be challenging for newcomers.

- Performance Tuning Demand:

Achieving optimal resource usage often requires in-depth profiling and manual parameter adjustments.

- Ecosystem Fragmentation:

Using different TensorFlow components (like Keras or tf.function) can create compatibility issues, making long-term projects harder to maintain.

Last updated: September 9, 2025

All research and content is powered by people, with help from AI.