Description

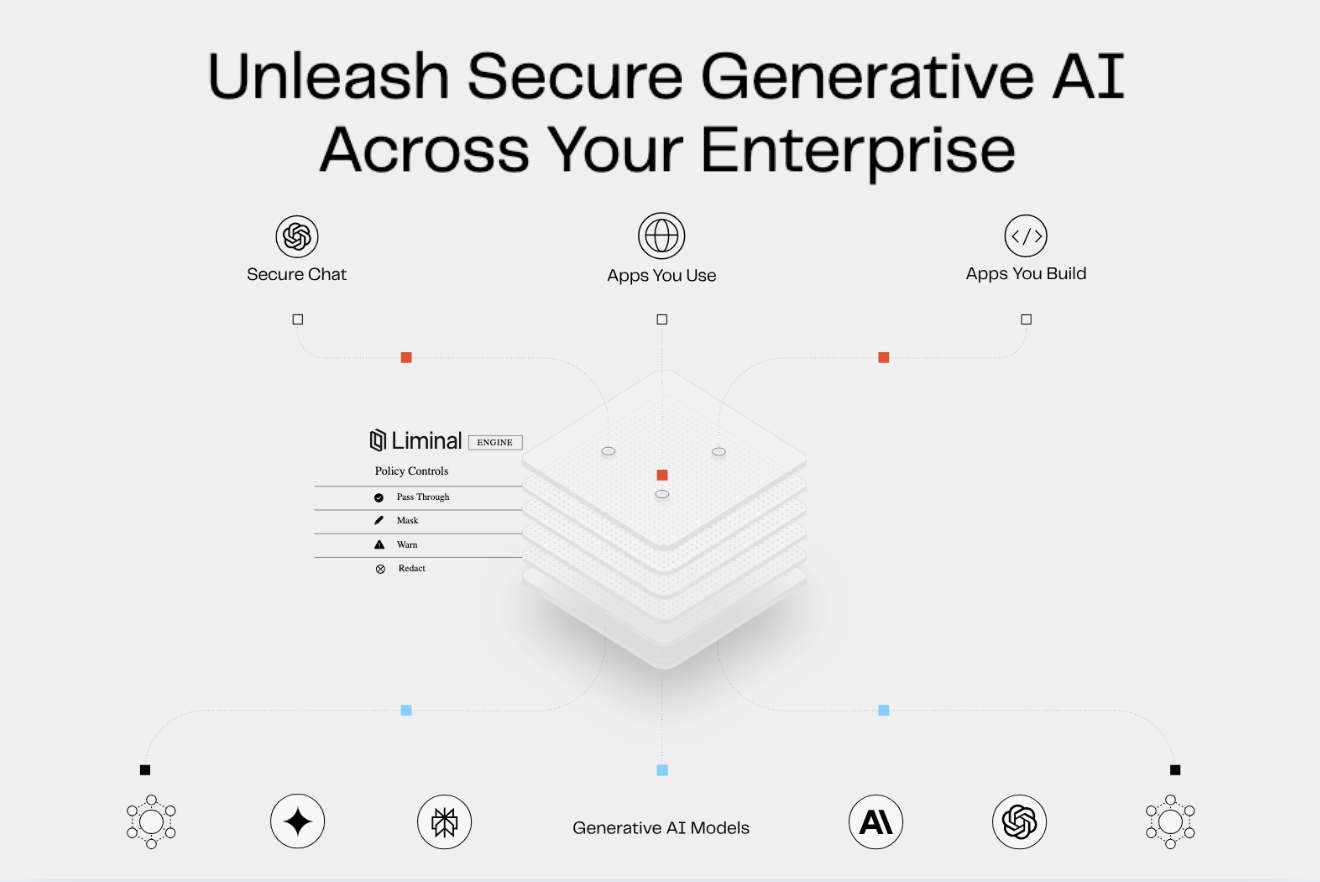

Liminal is an enterprise security platform that enables the safe adoption of generative AI by acting as a centralized control point for all AI interactions. Its core engine automatically intercepts employee prompts to any model and first detects and classifies sensitive data such as PII, PHI, or internal IP. Based on granular, administrator-set policies, it then protects that data by masking or redacting it before the query leaves the corporate environment. When the model's response comes back, the platform "rehydrates" it, restoring the original values in place of the masked terms so the user sees a complete answer. Security and compliance teams manage all of this from a central hub for policy management, observability, and compliance auditing.
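The intercept, mask, and rehydrate flow described above can be illustrated with a minimal sketch. This is not Liminal's implementation: the two regex detectors, the `POLICY` table, the `<CATEGORY_n>` placeholder format, and the `fake_model` stand-in for a third-party LLM are all hypothetical, chosen only to show how masked values can be restored while redacted values cannot.

```python
import re
from functools import partial
from typing import Callable, Dict, Tuple

# Hypothetical detectors. Production DLP engines use far richer
# classifiers (e.g. NER models) than two regular expressions.
DETECTORS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

# Hypothetical admin-set policy: "mask" is reversible, "redact" is not,
# and any category missing from the table is allowed through unchanged.
POLICY = {"EMAIL": "mask", "SSN": "redact"}


def _substitute(vault: Dict[str, str], category: str, action: str, match: re.Match) -> str:
    """Return the replacement text for one detected span."""
    if action == "redact":
        # Irreversible: the original value is never stored.
        return f"[REDACTED_{category}]"
    # Reversible: keep the original in a local vault keyed by placeholder.
    token = f"<{category}_{len(vault) + 1}>"
    vault[token] = match.group(0)
    return token


def protect(prompt: str) -> Tuple[str, Dict[str, str]]:
    """Mask or redact sensitive spans before the prompt leaves the network."""
    vault: Dict[str, str] = {}
    for category, pattern in DETECTORS.items():
        action = POLICY.get(category, "allow")
        if action != "allow":
            prompt = pattern.sub(partial(_substitute, vault, category, action), prompt)
    return prompt, vault


def rehydrate(response: str, vault: Dict[str, str]) -> str:
    """Swap placeholders in the model's response back to the original values."""
    for token, original in vault.items():
        response = response.replace(token, original)
    return response


def fake_model(prompt: str) -> str:
    # Stand-in for a third-party LLM call: it just echoes the prompt.
    return f"Draft reply for: {prompt}"


def safe_call(prompt: str, model: Callable[[str], str]) -> str:
    """Protect the prompt, call the model, and rehydrate its answer."""
    protected, vault = protect(prompt)
    return rehydrate(model(protected), vault)


if __name__ == "__main__":
    prompt = "Email jane.doe@example.com about SSN 123-45-6789."
    print(protect(prompt)[0])
    # Email <EMAIL_1> about SSN [REDACTED_SSN].
    print(safe_call(prompt, fake_model))
    # Draft reply for: Email jane.doe@example.com about SSN [REDACTED_SSN].
```

In this sketch the vault never leaves the local process, which is what allows masked values to be restored while redacted ones stay gone. Plain string replacement cannot recover a placeholder that the model paraphrases or drops, so a real system would need a more robust rehydration strategy.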

What Problem Does Liminal AI Solve?

Employees at regulated companies want to use LLMs and other AI tools in their daily work, but IT teams often can't allow it because sensitive data could leak to third-party AI providers. The result is compliance violations, security breaches, or productivity bottlenecks, as workers either go without AI tools or use them unsafely. Liminal provides secure tools that let employees use AI models wherever they work while keeping their data protected and monitored.

Pros

- Centralized AI Governance:

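Provides a single control point for security teams to set, enforce, and monitor security policies across all generative AI tools used in the organization.

- Real-Time Data Loss Prevention (DLP):

Detects sensitive data such as PII, PHI, and internal IP in prompts and masks or redacts it before the query leaves the corporate environment.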
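The governance side of a single control point, where one administrator-set policy applies to every AI tool and each decision is recorded for auditing, might look like the following minimal sketch. The category names, the action levels, the "strictest action wins" rule, and the `AuditEvent` record are hypothetical illustrations, not Liminal's API; detection is assumed to happen upstream, so `enforce` receives categories that have already been classified.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List

# Hypothetical central policy: one table governs every AI tool.
CENTRAL_POLICY: Dict[str, str] = {"PII": "mask", "PHI": "redact", "INTERNAL_IP": "block"}

# Ordering used to pick the strictest action when several categories appear.
SEVERITY: Dict[str, int] = {"allow": 0, "mask": 1, "redact": 2, "block": 3}


@dataclass
class AuditEvent:
    """One entry in the (hypothetical) compliance audit trail."""
    user: str
    tool: str
    categories: List[str]
    decision: str
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


AUDIT_LOG: List[AuditEvent] = []


def enforce(user: str, tool: str, categories: List[str]) -> str:
    """Return the strictest action the policy requires and log the decision."""
    decision = max(
        (CENTRAL_POLICY.get(c, "allow") for c in categories),
        key=SEVERITY.__getitem__,
        default="allow",  # no sensitive data detected
    )
    AUDIT_LOG.append(AuditEvent(user, tool, list(categories), decision))
    return decision
```

For example, `enforce("bob", "code_assistant", ["PII", "INTERNAL_IP"])` returns `"block"` because the stricter action wins, and the call is appended to `AUDIT_LOG` whichever tool it came from. Resolving conflicts by severity is one plausible design choice, not documented product behavior.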

Cons

- Development Complexity:

Building cross-agent workflows requires careful architecture design, prompt engineering, and operational oversight.

- Tooling Fragmentation Risk:

Integrating diverse agents and external APIs can introduce maintenance challenges and make the system more brittle.

- Limited Market Maturity:

As an emerging platform, Liminal may lack widespread adoption, third-party support, or prebuilt enterprise templates.

Last updated: September 14, 2025

All research and content is powered by people, with help from AI.