
Description

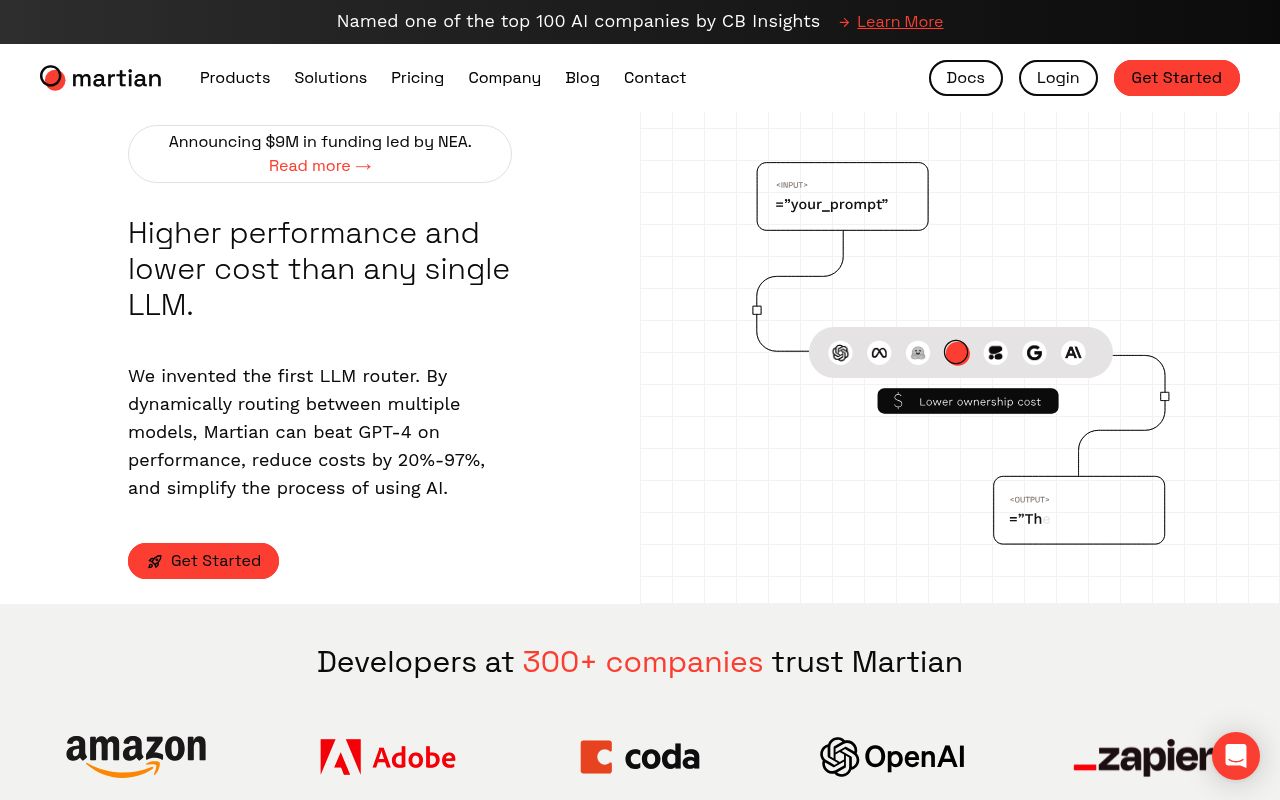

Martian's routing engine analyzes each incoming prompt and dynamically selects from hundreds of LLMs based on each model's demonstrated strengths for that type of query. Requests go through a unified API, so migrating an existing OpenAI or Anthropic integration requires only a single-line code change. The system continuously learns from user feedback and performance metrics to refine its routing decisions, while Airlock compliance scanning evaluates new models against enterprise security policies before they are added to the routing pool. Development teams integrate through REST APIs and Python/JavaScript SDKs that provide automatic failover, cost optimization, and real-time model performance analytics across multi-provider LLM deployments.
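The listing does not describe how Martian's routing actually works, so the following is only a minimal sketch of the general idea: keep a per-query-type quality estimate for each model, update it from feedback, send each request to the best-ranked model, and fail over to the next one when a call errors. The `FeedbackRouter` class, its exponential-moving-average update rule, and the stand-in model callables are hypothetical illustrations, not Martian's API, SDK, or algorithm.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# Hypothetical: a "model" is any callable mapping a prompt to a completion.
# It may raise, e.g. on a provider timeout or rate limit.
ModelFn = Callable[[str], str]


@dataclass
class FeedbackRouter:
    """Toy router (not Martian's): ranks models per query type by an exponential
    moving average (EMA) of feedback scores and fails over down that ranking."""

    models: Dict[str, ModelFn]
    alpha: float = 0.2          # weight given to the newest feedback score
    default_score: float = 0.5  # prior for a (query_type, model) pair with no feedback
    scores: Dict[Tuple[str, str], float] = field(default_factory=dict)

    def ranking(self, query_type: str) -> List[str]:
        # Best estimated score first; sorted() is stable even with reverse=True,
        # so ties keep the order in which models were registered.
        return sorted(
            self.models,
            key=lambda name: self.scores.get((query_type, name), self.default_score),
            reverse=True,
        )

    def route(self, query_type: str, prompt: str) -> Tuple[str, str]:
        errors: List[str] = []
        for name in self.ranking(query_type):
            try:
                return name, self.models[name](prompt)
            except Exception as exc:  # failover: try the next-ranked model
                errors.append(f"{name}: {exc!r}")
        raise RuntimeError("all models failed: " + "; ".join(errors))

    def record_feedback(self, query_type: str, model: str, score: float) -> None:
        # Blend a new score in [0, 1] into the running per-query-type estimate.
        key = (query_type, model)
        previous = self.scores.get(key, self.default_score)
        self.scores[key] = (1 - self.alpha) * previous + self.alpha * score


def flaky_model(prompt: str) -> str:
    # Stand-in for a provider that is currently failing.
    raise TimeoutError("provider timeout")


router = FeedbackRouter(models={"model-a": flaky_model, "model-b": lambda p: p.upper()})
router.record_feedback("code", "model-a", 1.0)  # model-a now ranks first for "code"
print(router.route("code", "hi"))  # model-a raises, so the call fails over
# → ('model-b', 'HI')
```

An EMA is used here only because it is a simple way to let recent feedback outweigh old feedback; a production router could just as well use bandit-style exploration or a learned predictor.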


What Problem Does Martian Solve?

Companies running AI applications struggle to choose the right model for each task and often default to expensive premium models for everything. This can inflate costs by 10-100x and yields inconsistent results, because different models excel at different types of requests. Martian's router automatically sends each request to the model best suited to the task's requirements, which the company says reduces costs by up to 99% while improving overall output quality.
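To make the cost argument concrete, here is a hypothetical sketch of cost-aware selection: for each task, pick the cheapest model whose quality score clears a threshold, then compare the bill against sending everything to a premium model. The model names, prices, quality scores, and workload are all invented, and this greedy threshold rule is a stand-in technique rather than Martian's routing method, so the savings it prints say nothing about Martian's real-world numbers.

```python
from typing import Dict, List, NamedTuple, Optional, Tuple


class ModelProfile(NamedTuple):
    usd_per_million_tokens: float  # hypothetical blended price
    quality: Dict[str, float]      # hypothetical per-task quality score, 0.0-1.0


# Illustrative catalog: model names, prices, and scores are invented.
CATALOG: Dict[str, ModelProfile] = {
    "premium-large": ModelProfile(15.00, {"code": 0.95, "summarize": 0.93, "classify": 0.94}),
    "mid-size": ModelProfile(1.50, {"code": 0.88, "summarize": 0.91, "classify": 0.93}),
    "small-open": ModelProfile(0.15, {"code": 0.60, "summarize": 0.85, "classify": 0.92}),
}
BASELINE = "premium-large"  # the "premium model for everything" default


def cheapest_adequate(task: str, min_quality: float) -> Optional[str]:
    """Cheapest model whose quality on `task` meets `min_quality`, or None."""
    candidates = [
        (profile.usd_per_million_tokens, name)
        for name, profile in CATALOG.items()
        if profile.quality.get(task, 0.0) >= min_quality
    ]
    return min(candidates)[1] if candidates else None


def workload_cost(workload: List[Tuple[str, float]], min_quality: float, route: bool = True) -> float:
    """Total USD for (task, millions_of_tokens) pairs, routed or premium-only."""
    total = 0.0
    for task, million_tokens in workload:
        model = cheapest_adequate(task, min_quality) if route else BASELINE
        model = model or BASELINE  # fall back if no model clears the bar
        total += CATALOG[model].usd_per_million_tokens * million_tokens
    return total


WORKLOAD = [("code", 10.0), ("summarize", 40.0), ("classify", 50.0)]
routed = workload_cost(WORKLOAD, min_quality=0.85)
baseline = workload_cost(WORKLOAD, min_quality=0.85, route=False)
print(f"routed ${routed:,.2f} vs premium-only ${baseline:,.2f} ({1 - routed / baseline:.1%} saved)")
# → routed $28.50 vs premium-only $1,500.00 (98.1% saved)
```

Raising `min_quality` pushes more traffic onto pricier models, so in this toy version the threshold is the single knob that trades quality against cost.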

Pros

- Interpretable Model Router: Transforms transformer behavior into programmatic logic, enabling transparent, controllable AI that improves trust and auditability.
- Cost-Performance Optimization: Dynamically routes each request to the optimal open-source or commercial LLM, often reducing latency and cost by 20–97%.
- Research-Backed Engineering: Developed from mechanistic interpretability research, backed by leading VCs and by enterprise customers across 300+ companies.

Cons

- Infrastructure Overhead: Deploying and maintaining the model router and interpretability tools demands engineering resources and dedicated talent.
- Open-Source Ecosystem Risk: Heavy reliance on open-source model updates exposes deployments to variability in quality, compatibility, and version stability.
- Emerging Market Constraints: As a specialized startup, Martian may offer fewer plug-and-play integrations than larger LLM orchestration platforms.

Last updated: September 8, 2025

All research and content is powered by people, with help from AI.