Description

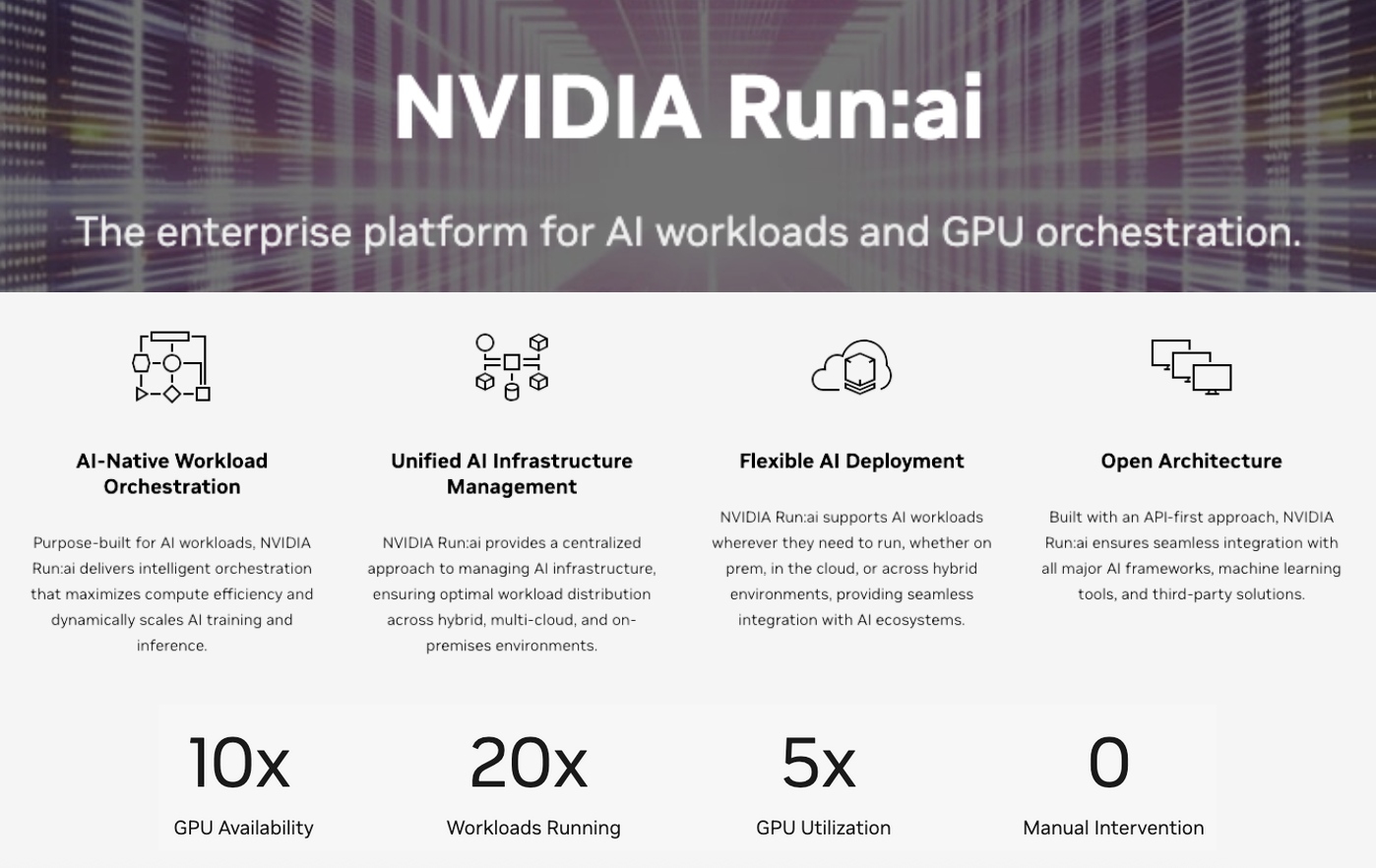

Run:ai’s orchestration platform helps teams manage GPU resources more efficiently for AI workloads. Built for Kubernetes, it creates virtual GPU pools that support fractional allocation, job preemption, and auto-scaling based on real-time demand. The system intercepts container scheduling and applies smart queuing to keep jobs running smoothly, even across large, multi-node workloads. Priority settings and gang scheduling help maintain SLAs. ML teams can deploy with kubectl or through a web UI with cluster dashboards. The platform also integrates with tools like MLflow, Weights & Biases, and Jupyter to track experiments and manage compute usage across shared infrastructure.
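To make the scheduling terms above concrete, here is a minimal toy sketch of priority ordering, fractional GPU allocation, and gang scheduling. It is not Run:ai's scheduler or API: the `Job` class, the `schedule` function, and the first-fit placement rule are all hypothetical, chosen only for illustration. It also assumes each worker asks for at most one GPU, and it does not model preemption or auto-scaling.

```python
from dataclasses import dataclass


@dataclass
class Job:
    # Hypothetical job description, not a Run:ai object.
    name: str
    priority: int          # higher value is scheduled first
    workers: int           # gang size: all workers start together or none do
    gpu_per_worker: float  # fractional requests allowed, e.g. 0.5 (assumed <= 1.0)


def schedule(jobs, free_gpus):
    """Greedy priority scheduling with fractional GPUs and gang semantics.

    free_gpus lists the remaining capacity of each GPU, e.g. [1.0, 1.0].
    Returns (placements, pending), where placements maps a job name to a
    list of (gpu_index, fraction) tuples, one per worker.
    """
    free = list(free_gpus)
    placements, pending = {}, []
    # Highest priority first; sorted() is stable, so ties keep input order.
    for job in sorted(jobs, key=lambda j: -j.priority):
        trial = list(free)  # tentative capacities, committed only if the whole gang fits
        assigned = []
        for _ in range(job.workers):
            # First fit: the first GPU with enough remaining capacity.
            idx = next(
                (i for i, cap in enumerate(trial) if cap + 1e-9 >= job.gpu_per_worker),
                None,
            )
            if idx is None:
                break
            trial[idx] -= job.gpu_per_worker
            assigned.append((idx, job.gpu_per_worker))
        if len(assigned) == job.workers:
            free = trial  # gang fits: commit the allocation
            placements[job.name] = assigned
        else:
            pending.append(job.name)  # gang does not fit: start no workers
    return placements, pending


if __name__ == "__main__":
    jobs = [
        Job("notebook", priority=5, workers=2, gpu_per_worker=0.5),
        Job("train", priority=10, workers=2, gpu_per_worker=1.0),
        Job("big-train", priority=1, workers=3, gpu_per_worker=1.0),
    ]
    placed, waiting = schedule(jobs, [1.0, 1.0, 1.0])
    print(placed)
    print(waiting)
```

In this sketch the two notebook workers share a single GPU at half a GPU each, while the three-worker job stays queued as a unit rather than starting partially, which is the all-or-nothing behavior that gang scheduling refers to.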

What Problem Does Run:ai (NVIDIA) Solve?

AI teams struggle to share costly GPU resources across multiple projects and users, which often leaves hardware underutilized. Idle hardware can cost thousands per day, and delayed model training can push product launches back by months. Run:ai automatically allocates and scales GPU resources based on real-time demand, keeping utilization high while giving teams the computing power they need when they need it.

Pros

- GPU Resource Optimization:

Run

- Kubernetes-Native Integration:

Seamlessly embeds within Kubernetes clusters, enabling CI/CD-style orchestration for training and inference.

- Collaboration via Virtual Queues:

Provides isolated yet shareable GPU queues for teams, enhancing resource governance and transparency.

Cons

- Scheduler Complexity:

Efficient GPU scheduling and partitioning require cluster administration know-how and fine-tuning.

- Platform Dependency:

Designed for Kubernetes-based NVIDIA GPU environments, limiting suitability for non-K8s or multi-cloud setups.

- Usage Cost Variability:

GPU consumption may surge under dynamic workloads, making budgeting and forecasting more challenging.

Last updated: July 29, 2025

All research and content is powered by people, with help from AI.