Enterprises winning at AI are building an approach to governance designed for how MCP-connected agents work

Enterprises using AI agents through Model Context Protocol (MCP) need new zero trust models. Why? Because AI agents don’t behave like traditional identities, especially within an MCP context. They discover tools, connect actions, and move fast. This piece explores how MCP reshapes security fundamentals and how organizations can extend zero trust principles to govern AI agent actions.

Here’s the reality – your teams aren’t waiting for official AI security policies. Marketing teams are using AI agents to analyze campaign performance, route marketing leads, and draft social content. Finance teams use agents for data analysis and reporting. Developers deploy agents that access repositories, vibe code, and push updates.

What’s making much of this possible is MCP, an open standard for how AI agents connect to your enterprise systems, which gives agents access to multiple tools without custom integrations. It’s a compelling business case. Anthropic introduced MCP in 2024 and reports that there are now more than 10,000 active public MCP servers, covering everything from developer tools to Fortune 500 deployments. Forrester predicts that 30 percent of enterprises will deploy their own MCP servers by this year.

The promise of AI agents that can handle complex, multi-step workflows leveraging a rich dataset via MCP is exciting. Even so, the same capabilities that make AI agents so beneficial also make them risky. The challenge now isn’t just about access but about governing which actions agents can take once they gain access. This is the scenario zero trust attempts to address – never trust, always verify, and enforce least privilege. While still very much relevant, zero trust needs to evolve for how AI operates through MCP.

AI agents work differently through MCP. Zero trust must keep up

Zero trust validates access at five control points – identity, devices, network, applications, and data. A human user, for example, authenticates at 9 am, accesses a predictable set of resources throughout the day, and logs out at 5 pm. Their role doesn’t change in the middle of the session and their behavior is generally governed by human speed and intent.

AI agents operating through MCP challenge this model. In an MCP-connected world, AI agents don’t just request access to your company tools and data; they discover tools and take action across multiple tools and datasets.

Consider a marketing AI agent authorized to post social media updates to your company’s accounts. The agent can authenticate to X, Instagram, and LinkedIn through approved APIs and publish content on behalf of your brand. So far so good.

But when that agent connects to an MCP server, “post access” opens the door to more tools and resources within your marketing and sales stack. The agent connects these tools together – it pulls your enterprise customer list, finds their X handles, writes personalized posts mentioning them by name, generates images, and schedules everything to go live.

Essentially, the agent connected dots you never intended to be connected. Suddenly, “post to social media” becomes much more than simply publishing human pre-approved content.

The MCP pillar within a zero trust architecture

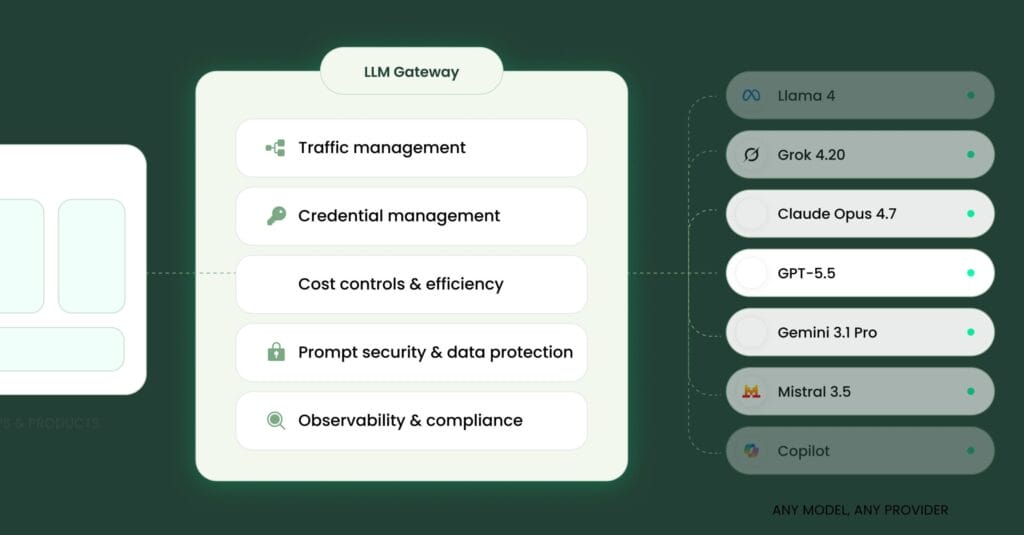

The solution isn’t blocking AI adoption or trying to adapt existing identity and access management security for agent workflows. It’s extending zero trust principles to operate at the MCP layer. Action-centric controls and continuous monitoring both need a place where they can be enforced, and the MCP layer is where agents discover and request tools.

This requires applying familiar security concepts in new ways:

Never trust, always verify continuously

Today, zero trust validates at login, request, or connection.

In contrast, AI agents compose actions dynamically. An agent that authenticated with valid credentials this morning might discover new tool combinations later in the afternoon that create risk. The agent authorized to “read customer data” might discover it can also export that data, cross-reference it with external APIs, and publish summaries. Each step in this workflow is technically within its permissions, but the steps combine into outcomes humans did not intend. Security within MCP environments needs continuous monitoring of agent behavior and verification throughout the session.
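To make the idea concrete, here is a minimal sketch of per-call verification that looks at what a session has already done, not just whether the agent logged in. The tool names and the `RISKY_COMBINATIONS` list are hypothetical, borrowed from the example above; a real deployment would define its own.

```python
from dataclasses import dataclass, field

# Hypothetical tool names and combinations, based on the example in the text.
RISKY_COMBINATIONS = [
    frozenset({"read_customer_data", "export_data", "call_external_api"}),
    frozenset({"read_customer_data", "publish_summary"}),
]


@dataclass
class AgentSession:
    """Tracks which tools an agent has used since it authenticated."""
    agent_id: str
    tools_used: set = field(default_factory=set)

    def verify_call(self, tool_name: str) -> bool:
        """Re-verify on every call, not only at login.

        Returns False if adding this tool would complete a risky
        combination. The tool is recorded only when the call is allowed.
        """
        candidate = self.tools_used | {tool_name}
        for combo in RISKY_COMBINATIONS:
            if combo <= candidate:
                return False
        self.tools_used.add(tool_name)
        return True


session = AgentSession(agent_id="support-agent")
print(session.verify_call("read_customer_data"))  # True: allowed on its own
print(session.verify_call("export_data"))         # True: no risky combination yet
print(session.verify_call("publish_summary"))     # False: completes a risky combination
```

Each call is individually permitted here. The denial comes from the combination, which is the part a login-time check cannot see.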

Least privilege applied to identities and agent actions

Today, least privilege dictates that identities get only the minimum access to data needed, nothing more.

MCP least privilege takes into account which resources exist and how agents connect them. When an agent connects to an MCP server, it discovers available tools before accessing underlying resources. As a result, the future of zero trust needs to extend to how these resources can be combined and the overall sequence of agent actions.
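One piece of this can be sketched as least privilege applied at discovery time: an agent only sees the tools its job needs, so it cannot combine the rest. This is an illustration under assumptions, not a definitive design. The `ALLOWED_TOOLS` allowlist, the agent ID, and the tool names are all hypothetical, and the tool entries are a simplified stand-in for what an MCP server advertises.

```python
# Hypothetical allowlist: agent ID -> tools that agent's job actually needs.
ALLOWED_TOOLS = {
    "social-agent": {"draft_post", "schedule_post"},
}


def filter_discovered_tools(agent_id: str, discovered: list) -> list:
    """Return only the tool entries this agent is allowed to see."""
    allowed = ALLOWED_TOOLS.get(agent_id, set())  # unknown agents see nothing
    return [tool for tool in discovered if tool["name"] in allowed]


# Simplified stand-in for the tool list an MCP server might advertise.
discovered = [
    {"name": "draft_post", "description": "Draft a social post"},
    {"name": "schedule_post", "description": "Schedule a post"},
    {"name": "export_customer_list", "description": "Export CRM contacts"},
]

visible = filter_discovered_tools("social-agent", discovered)
print([tool["name"] for tool in visible])  # ['draft_post', 'schedule_post']
```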

Assume breach, monitor for authorized tools used in unauthorized ways

Today, breach detection looks for unauthorized access.

With AI agents, the risk now includes authorized tools used or combined in unintended ways. Monitoring determines what normal and authorized agent activity looks like and detects when an agent attempts something out of scope before it happens.
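As a rough illustration, "normal" can be approximated by the tool-to-tool steps seen in workflows a human has already reviewed. The sketch below assumes that approach; the function names and tool sequences are hypothetical, and a production system would likely use a richer model than pairs of consecutive tools.

```python
def learn_baseline(approved_runs):
    """Collect every (previous_tool, next_tool) pair seen in approved runs."""
    baseline = set()
    for run in approved_runs:
        for prev_tool, next_tool in zip(run, run[1:]):
            baseline.add((prev_tool, next_tool))
    return baseline


def is_out_of_scope(baseline, previous_tool, requested_tool):
    """Check a requested step against the baseline before it runs."""
    return (previous_tool, requested_tool) not in baseline


# Hypothetical tool sequences from reviewed, approved workflows.
approved_runs = [
    ["draft_post", "generate_image", "schedule_post"],
    ["draft_post", "schedule_post"],
]
baseline = learn_baseline(approved_runs)

print(is_out_of_scope(baseline, "draft_post", "schedule_post"))         # False: seen before
print(is_out_of_scope(baseline, "draft_post", "export_customer_list"))  # True: never seen
```

Because the check takes the requested tool as input, it can run before the call is forwarded rather than after the fact.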

Enable growth without losing control

Forward-thinking enterprises are reevaluating long-trusted security principles and architectures. They’re looking at how to enable AI agents safely by applying security at the right layers, where agents follow workflows and access resources. Here’s how these organizations are taking control:

They apply business context and security controls at the MCP layer

Enterprises getting this right deploy security that understands MCP protocols. These systems can see when an agent connects to an MCP server, which tools it discovers, and which ones it requests to use.
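MCP messages are JSON-RPC 2.0, and the protocol defines methods such as `initialize`, `tools/list`, and `tools/call`, which is what makes this kind of visibility possible. Here is a minimal sketch of classifying an agent's requests. The event labels, the `describe_mcp_request` function, and the `schedule_post` tool are hypothetical.

```python
import json


def describe_mcp_request(raw_message: str) -> dict:
    """Summarize a JSON-RPC request sent by an agent to an MCP server."""
    message = json.loads(raw_message)
    method = message.get("method")
    if method == "initialize":
        return {"event": "connect"}
    if method == "tools/list":
        return {"event": "discover_tools"}
    if method == "tools/call":
        params = message.get("params", {})
        return {"event": "use_tool", "tool": params.get("name")}
    return {"event": "other", "method": method}


request = json.dumps({
    "jsonrpc": "2.0",
    "id": 7,
    "method": "tools/call",
    # "schedule_post" and its arguments are hypothetical.
    "params": {"name": "schedule_post", "arguments": {"when": "tomorrow"}},
})
print(describe_mcp_request(request))  # {'event': 'use_tool', 'tool': 'schedule_post'}
```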

They connect permissions to business context, not just roles

An agent creating and posting social media content needs different tool access from an agent processing competitive intelligence. Effective agent governance ties permissions to specific business processes and the people using them.

They monitor in real time

Enterprises enabling AI safely implement real-time guardrails that monitor what agents are doing with their access. When an agent takes action and tries something unexpected, the system can block that action, require another point of human approval, or trigger an alert.
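The three responses described above (block, require approval, alert) can be sketched as a small decision function. This assumes rules keyed by business process and tool name, with anything unlisted denied by default. The rule table, process name, and tool names are hypothetical.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    ALERT = "alert"                # let it run, but notify security
    REQUIRE_APPROVAL = "approve"   # pause until a human signs off
    BLOCK = "block"


# Hypothetical rules: (business process, tool name) -> decision.
RULES = {
    ("social_publishing", "draft_post"): Decision.ALLOW,
    ("social_publishing", "schedule_post"): Decision.REQUIRE_APPROVAL,
    ("social_publishing", "read_customer_data"): Decision.ALERT,
    ("social_publishing", "export_customer_list"): Decision.BLOCK,
}


def evaluate(business_process: str, tool_name: str) -> Decision:
    """Look up the decision for a request; anything unlisted is blocked."""
    return RULES.get((business_process, tool_name), Decision.BLOCK)


for tool in ["draft_post", "schedule_post", "delete_account"]:
    print(tool, "->", evaluate("social_publishing", tool).value)
# draft_post -> allow
# schedule_post -> approve
# delete_account -> block
```

Defaulting to `BLOCK` is a design choice in this sketch: a tool nobody has reviewed is treated as unexpected rather than as harmless.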

They build confidence through visibility

Enterprises that are building confidence in their AI strategy maintain oversight through audit trails that show what happened, which agent accessed which data, which tools were used, which actions were approved or blocked, and more.
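An audit trail like this is often stored as one structured record per agent action. The sketch below shows one possible shape, assuming JSON lines. The field names, agent ID, tool, and resource are hypothetical and not a prescribed schema.

```python
import json
from datetime import datetime, timezone


def audit_record(agent_id, tool, resource, decision, timestamp=None):
    """Build one JSON line describing a single agent action."""
    timestamp = timestamp or datetime.now(timezone.utc)
    return json.dumps({
        "time": timestamp.isoformat(),
        "agent": agent_id,
        "tool": tool,
        "resource": resource,
        "decision": decision,  # e.g. "approved" or "blocked"
    })


line = audit_record(
    agent_id="finance-agent",
    tool="run_report",
    resource="ledger/q3",
    decision="approved",
    timestamp=datetime(2025, 1, 15, 9, 30, tzinfo=timezone.utc),
)
print(line)
```

Keeping the agent, tool, resource, and decision in separate fields means the same log can answer both security questions (what was blocked) and business questions (which workflows teams actually run).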

They enforce policy where agent actions take place

Traditional security reacts after agents have already initiated workflows. MCP-layer policy enforcement flips this around. Decisions about what an agent can do happen when the request is being made, before action begins. When policy enforcement happens at the traffic layer, you can intervene before workflows execute, not just audit them afterward.

They capture value across their teams

When you handle governance well, you’re not just preventing bad workflows, but encouraging good ones. Without visibility into how agents are being used, you’ll never know about your team’s AI wins – the engineer who automated a reconciliation process, the customer support team that built an agent to resolve support tickets. Great governance reveals what’s working, measures the impact, and empowers teams to safely experiment and celebrate successes.

They treat AI as a business imperative

Our CEO and founder Oren always puts it like this: “Treat AI like a business. Know the business use and the business context. Control access by both identity and action.”

By extending zero trust principles to the MCP layer, enterprises can enable AI agents safely, maintaining both governance and security while still capturing productivity gains. As MCP adoption accelerates today and beyond, this isn’t just a future directive, but a competitive advantage.

This is why companies like Barndoor are building governance for MCP-connected agents. Barndoor sits between your AI agents and your MCP servers, evaluating every tool request against your business policies before the agent can act. Schedule a live demo to see how Barndoor can help you enforce and secure your AI agents.